Pumping Code #7 — Four AWS Agent Services, One Decision: Which Stack Actually Ships?

AWS dropped Agents, Flows, AgentCore, and Strands. Here's your decision framework—before you build the same prototype four times.

Quick question: You walk into the gym, and there are four ways to train your squat. One rack is beginner-friendly with safety bars. One has a fancy digital interface. One is completely customizable but requires assembly. And then there’s skipping the rack entirely and training with your own body weight, calisthenics style. Which one gets you to your lift goal fastest?

Same problem in AWS right now. You need to build an agent, and Bedrock just handed you four different services: Agents, Flows, AgentCore, Strands. All sound similar. All claim to “accelerate your AI agents.” But they’re fundamentally different tools for different jobs—and picking wrong costs you weeks rebuilding.

This issue cuts through the noise. One deep comparison. Real production examples. Code samples from official AWS repos. A decision matrix so you stop prototyping and start shipping.

Let’s get into it.

Four Paths to Building Agentic AI on AWS: Your Definitive Guide

Amazon Bedrock now offers four distinct approaches for building AI agents, each optimized for different use cases and developer preferences. The critical distinction: AgentCore and Strands are fully LLM-agnostic, supporting both Bedrock models and external providers like OpenAI and Gemini.

For AWS architects evaluating these services, understanding which path aligns with your requirements—managed versus code-first, single-LLM versus multi-model, visual workflows versus programmatic control—determines both your time-to-production and long-term flexibility.

Amazon Bedrock Agents: Fully managed multi-agent orchestration

Amazon Bedrock Agents is AWS’s configuration-based service for building autonomous agents without managing infrastructure. You define agents through API calls or the AWS Console by specifying foundation models, instructions, action groups (via OpenAPI schemas), guardrails and knowledge bases. The service handles prompt engineering, orchestration, memory management, and monitoring out of the box.

Architecture patterns: Bedrock Agents excels at hierarchical multi-agent collaboration using supervisor and router modes. A supervisor agent coordinates specialist sub-agents—either sequentially or in parallel—breaking complex requests into manageable tasks. In routing mode, straightforward requests go directly to the appropriate specialist, while complex queries trigger the supervisor’s orchestration logic. This mirrors human team structures: an executive assistant delegates to travel advisors and research analysts, each with full access to action groups, knowledge bases, and guardrails.
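To make the difference between the two modes concrete, here is a framework-free toy sketch. It is not how Bedrock Agents is implemented: the `SPECIALISTS` table, the keyword-based `classify` helper, and the `handle` function are hypothetical stand-ins for decisions the managed service makes with an LLM.

```python
from typing import Callable, Dict, List

# Hypothetical specialists; in Bedrock Agents these would be collaborator agents.
SPECIALISTS: Dict[str, Callable[[str], str]] = {
    "travel": lambda task: f"[travel advisor] {task}",
    "research": lambda task: f"[research analyst] {task}",
}


def classify(request: str) -> List[str]:
    """Return the specialists a request touches (keyword matching stands in for an LLM)."""
    keywords = {"travel": ("flight", "hotel"), "research": ("report", "analysis")}
    text = request.lower()
    return [name for name, words in keywords.items() if any(w in text for w in words)]


def handle(request: str) -> List[str]:
    """Route simple requests directly; otherwise fan out like a supervisor would."""
    targets = classify(request)
    if len(targets) == 1:
        # Routing mode: one clear specialist, so no orchestration is needed.
        return [SPECIALISTS[targets[0]](request)]
    # Supervisor mode: one subtask per specialist involved.
    # If nothing matched, every specialist is consulted.
    targets = targets or list(SPECIALISTS)
    return [SPECIALISTS[name](f"subtask for {name}: {request}") for name in targets]
```

Calling `handle("Book a flight to Berlin")` takes the direct route, while a request that mentions both a hotel and an analysis is split into one subtask per specialist.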

Production example: A financial services firm built a three-agent system where a Financial Agent (supervisor) coordinates a Portfolio Assistant (creates detailed company analyses) and Data Assistant (queries Bedrock Knowledge Bases with financial documents). The system handles natural language queries like “Create an investment portfolio for tech companies,” returning comprehensive breakdowns with optimization suggestions—all within a serverless, scalable architecture.

When to use: Choose Bedrock Agents when you need rapid deployment without writing orchestration code, tight integration with other Bedrock services, or when business stakeholders need to configure agents through visual interfaces. It’s ideal for customer service automation, insurance claim processing, and scenarios where AWS’s managed experience outweighs framework flexibility.

Code example:

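import time

import boto3

# Initialize clients: "bedrock-agent" is the build-time API, "bedrock-agent-runtime" handles invocation
bedrock_agent = boto3.client("bedrock-agent", region_name="us-east-1")
bedrock_runtime = boto3.client("bedrock-agent-runtime", region_name="us-east-1")


def wait_until(get_status, target, timeout=120):
    """Poll get_status() until it returns target. Agent creation, preparation, and alias creation are asynchronous."""
    deadline = time.time() + timeout
    while time.time() < deadline:
        status = get_status()
        if status == target:
            return
        if status == "FAILED":
            raise RuntimeError(f"Resource entered FAILED state while waiting for {target}")
        time.sleep(2)
    raise TimeoutError(f"Timed out waiting for status {target}")


def agent_status():
    return bedrock_agent.get_agent(agentId=agent_id)["agent"]["agentStatus"]


# 1. Create Agent
response = bedrock_agent.create_agent(
    agentName="MyFinancialAgent",
    instruction="You are a financial assistant that can calculate returns and analyze portfolios.",
    foundationModel="anthropic.claude-sonnet-4-20250514-v1:0",
    # Placeholder role name: the agent needs an execution role allowed to invoke the model
    agentResourceRoleArn="arn:aws:iam::${AWS_ACCOUNT_ID}:role/MyBedrockAgentRole",
    idleSessionTTLInSeconds=600,
)
agent_id = response["agent"]["agentId"]
wait_until(agent_status, "NOT_PREPARED")

# 2. Create Action Group with function schema
action_group_response = bedrock_agent.create_agent_action_group(
    agentId=agent_id,
    agentVersion="DRAFT",
    actionGroupName="FinancialCalculations",
    description="Tools for financial calculations and analysis",
    actionGroupState="ENABLED",
    functionSchema={
        "functions": [
            {
                "name": "calculate_roi",
                "description": "Calculate return on investment for a given principal and return",
                "parameters": {
                    "principal": {
                        "description": "Initial investment amount",
                        "type": "number",
                        "required": True,
                    },
                    "return_percentage": {
                        "description": "Expected return percentage",
                        "type": "number",
                        "required": True,
                    },
                },
            }
        ]
    },
    actionGroupExecutor={
        "lambda": "arn:aws:lambda:us-east-1:${AWS_ACCOUNT_ID}:function:FinancialCalculator"
    },
)

# 3. Prepare Agent (required before invocation)
bedrock_agent.prepare_agent(agentId=agent_id)
wait_until(agent_status, "PREPARED")

# 4. Create Agent Alias
alias_response = bedrock_agent.create_agent_alias(
    agentId=agent_id,
    agentAliasName="production",
)
agent_alias_id = alias_response["agentAlias"]["agentAliasId"]
wait_until(
    lambda: bedrock_agent.get_agent_alias(agentId=agent_id, agentAliasId=agent_alias_id)["agentAlias"]["agentAliasStatus"],
    "PREPARED",
)

# 5. Invoke Agent
session_id = "user-session-123"
prompt = "What's the ROI if I invest $10,000 with a 7% return?"
invoke_response = bedrock_runtime.invoke_agent(
    agentId=agent_id,
    agentAliasId=agent_alias_id,
    sessionId=session_id,
    inputText=prompt,
    enableTrace=True,
)

# 6. Read the answer: the response is an event stream, so concatenate the text chunks
answer = ""
for event in invoke_response["completion"]:
    if "chunk" in event:
        answer += event["chunk"]["bytes"].decode("utf-8")
print(answer)

Amazon Bedrock Flows: Visual orchestration for complex workflows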

Amazon Bedrock Flows provides a drag-and-drop interface for building multi-step generative AI workflows without code. Developers connect nodes—each representing a prompt, agent, knowledge base query, Lambda function, or conditional branch—to create deterministic pipelines. Previously known as “Prompt Flows,” it became generally available in November 2024 with enhanced safety (embedded Guardrails), traceability (complete execution path visibility), and support for long-running workflows up to 24 hours.

Visual workflow capabilities: The Flow Builder enables rapid prototyping through an intuitive canvas where you draw connections between Input, Prompt, Agent, Knowledge Base, Condition, Iterator, DoWhile Loop, and Output nodes. Flows supports both visual creation and programmatic access via SDK for CI/CD integration. Version management allows A/B testing different prompt variations without disrupting production.

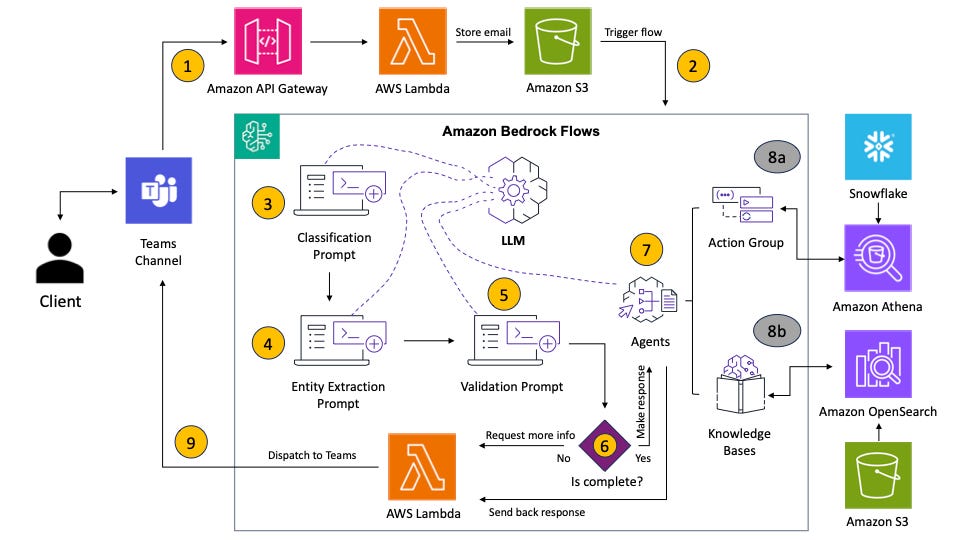

Production example: Parameta Solutions built an email triage system in just two weeks that reduced client resolution times from weeks to days. [Blog] The flow implements: classification prompts identifying inquiry types → entity extraction discovering key data points → validation ensuring completeness → Bedrock Agent queries synthesizing responses from Snowflake via Athena and OpenSearch knowledge bases. Non-technical teams can now understand and adjust workflows, democratizing AI access across the organization.
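The classify, extract, validate, respond shape of such a flow can be sketched in plain Python. This is an illustration of deterministic orchestration only, not the Flows API or Parameta’s implementation: the node functions, the ISIN-like pattern, and the `run_flow` helper are assumptions made up for the example, and keyword matching stands in for prompt nodes.

```python
import re
from typing import Dict


def classify(email: str) -> str:
    """Classification node: keyword matching stands in for a prompt node."""
    return "pricing" if "price" in email.lower() else "general"


def extract_entities(email: str) -> Dict[str, str]:
    """Entity-extraction node: pull out an ISIN-like identifier if present."""
    match = re.search(r"\b[A-Z]{2}[A-Z0-9]{9}[0-9]\b", email)
    return {"instrument": match.group(0)} if match else {}


def run_flow(email: str) -> Dict[str, object]:
    """Execute nodes in a fixed order and record the path taken (the trace)."""
    trace = ["input"]
    category = classify(email)
    trace.append("classify")
    entities = extract_entities(email)
    trace.append("extract")
    # Condition node: only complete pricing inquiries continue to the agent step.
    if category == "pricing" and "instrument" in entities:
        trace.append("agent")
        answer = f"Looking up pricing for {entities['instrument']}"
    else:
        trace.append("escalate")
        answer = "Forwarded to a human for clarification"
    trace.append("output")
    return {"answer": answer, "trace": trace}
```

Because every branch is explicit, the same input always takes the same path, and the recorded trace shows exactly which nodes ran.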

When to use: Flows excels when you need deterministic orchestration with explicitly stated decision logic, complex conditional branching based on AI outputs, integration of multiple AWS services in coordinated pipelines, or rapid iteration with comprehensive observability. Ideal for document processing, email triage, content generation pipelines, and report generation requiring multiple data aggregation steps.

Bedrock AgentCore: Framework-agnostic production infrastructure

Amazon Bedrock AgentCore eliminates the trade-off between open-source flexibility and enterprise infrastructure. It’s a comprehensive platform comprising seven modular, fully-managed services that enable developers to build agents with any framework (LangGraph, CrewAI, Strands, LlamaIndex, OpenAI SDK, AutoGen) and any model (Bedrock models, OpenAI GPT-4, Google Gemini, local Ollama instances).

Seven modular services:

AgentCore Runtime: Serverless execution environment with industry-leading 8-hour support for long-running workloads, complete session isolation using dedicated microVMs, and sub-second cold starts. Supports VPC connectivity and AWS PrivateLink.

AgentCore Gateway: Zero-code tool integration transforming APIs and Lambda functions into MCP-compatible agent tools with semantic discovery for intelligent tool selection.

AgentCore Memory: Fully-managed short-term (session) and long-term memory with built-in strategies for user preferences, semantic facts, and session summaries.

AgentCore Identity: Secure OAuth 2 access management integrating with Amazon Cognito, Okta, and Microsoft Entra ID, enabling agents to access resources on behalf of users.

AgentCore Observability: Real-time dashboards with OpenTelemetry compatibility for Datadog, Dynatrace, LangSmith, Langfuse, and Arize Phoenix integration.

AgentCore Code Interpreter: Secure multi-language code execution in isolated sandboxes for data analysis and visualization.

AgentCore Browser Tool: Managed cloud-based browser runtime with sub-second latency for web automation and session recording.

Production example: Epsilon, part of Publicis Groupe (world’s largest advertising company), built an Intelligent Campaign Automation system managing thousands of personalized marketing campaigns daily. Using AgentCore Runtime for effortless deployment and Observability for operational visibility, they achieved 30% reduction in campaign setup time, 20% increase in personalization capacity, and deployment in days instead of weeks—saving 8 hours per team per week. [YouTube Video] [Case Study]

When to use: Choose AgentCore when moving from prototype to production, requiring multi-framework deployments across teams, implementing enterprise security with session isolation and comprehensive audit trails, building long-running workflows up to 8 hours, or establishing agent-to-agent communication using MCP and A2A protocols. Essential for healthcare reviews, insurance claim processing, and complex business workflows.

Code example:

from bedrock_agentcore import BedrockAgentCoreApp
from strands import Agent
from strands.models import BedrockModel

app = BedrockAgentCoreApp()

# Create a BedrockModel with explicit configuration
bedrock_model = BedrockModel(
    model_id="anthropic.claude-sonnet-4-20250514-v1:0",
    region_name="us-west-2",
    temperature=0.3,
)

agent = Agent(model=bedrock_model)


@app.entrypoint
def invoke(payload):
    """Your AI agent function"""
    user_message = payload.get("prompt", "Hello!")
    result = agent(user_message)
    return {"result": result.message}


if __name__ == "__main__":
    app.run()

Strands SDK: Model-driven multi-agent orchestration

Strands Agents is AWS’s open-source SDK taking a model-driven approach where LLMs act as their own orchestrators rather than following rigid control structures. Released in May 2025, it reached 1.0 in July with over 1 million downloads and battle-tested production use in Amazon Q Developer, AWS Glue, VPC Reachability Analyzer, and AWS Transform for .NET.

Model-driven philosophy: Instead of building complex state machines and predefined workflows, Strands leverages modern LLM reasoning capabilities to let models make intelligent decisions about tool usage and adaptation. Agents require just three components: Model (the LLM providing reasoning), Tools (external functions/APIs), and Prompt (natural language instructions). This eliminates boilerplate while enabling sophisticated behavior.

Four multi-agent primitives:

Agents-as-Tools: Hierarchical delegation where specialist agents become intelligent tools for orchestrator agents, mirroring human team structures.

Handoffs: Explicit transfer of control to humans with full conversation context preservation, ideal for tasks requiring human approval or expertise.

Swarms: Self-organizing collaborative teams with shared memory where agents autonomously coordinate without predefined workflows.

Graphs: Deterministic workflows with conditional routing for compliance requirements and well-defined business processes.

These primitives compose freely—swarms can contain graphs, graphs can orchestrate swarms, enabling gradual adoption from single agents to complex multi-agent systems.
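As a rough picture of the swarm idea, the following framework-free sketch shows agents that share one memory and decide themselves who goes next. It does not use the Strands API: `run_swarm`, the three agent functions, and the hard-coded handoff rules are hypothetical, and a real swarm would let the model make these decisions.

```python
from typing import Callable, Dict, List, Optional, Tuple

SharedMemory = Dict[str, str]
# Each agent reads and writes shared memory, then names the next agent (None stops the swarm).
AgentFn = Callable[[SharedMemory], Optional[str]]


def researcher(memory: SharedMemory) -> Optional[str]:
    memory["findings"] = f"notes on {memory['task']}"
    return "writer"


def writer(memory: SharedMemory) -> Optional[str]:
    memory["draft"] = f"report based on {memory['findings']}"
    return "reviewer"


def reviewer(memory: SharedMemory) -> Optional[str]:
    # Send the draft back once, then approve.
    if "revised" not in memory:
        memory["revised"] = "yes"
        return "writer"
    memory["status"] = "approved"
    return None


def run_swarm(
    agents: Dict[str, AgentFn], start: str, task: str, max_handoffs: int = 10
) -> Tuple[SharedMemory, List[str]]:
    """Run agents until one returns None or the handoff budget is used up."""
    memory: SharedMemory = {"task": task}
    order: List[str] = []
    current: Optional[str] = start
    while current is not None and len(order) < max_handoffs:
        order.append(current)
        current = agents[current](memory)
    return memory, order


AGENTS: Dict[str, AgentFn] = {"researcher": researcher, "writer": writer, "reviewer": reviewer}
```

Note that no workflow is defined anywhere: the order of execution emerges from the handoffs, and `max_handoffs` is the only guard against agents passing work back and forth forever.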

Production example: Drug discovery research assistants for pharmaceutical companies like Genentech and AstraZeneca search multiple scientific databases (PubMed, clinical trials, molecular databases) simultaneously via MCP, synthesize findings, and generate comprehensive reports on drug targets and therapeutic areas. Researchers see real-time progress with streaming, can interrupt mid-process, and benefit from integration with Bedrock Knowledge Bases for institutional research history. Time savings: from weeks of literature review to minutes, covering more sources than humanly possible with full audit trails for regulatory compliance. [Blog]

When to use: Strands excels when building on AWS infrastructure with native service integration, requiring enterprise-grade security and compliance, needing flexibility in model selection (switch between Claude, OpenAI, Gemini, local Ollama without rewriting logic), prototyping complex multi-agent systems with hierarchical or collaborative architectures, or deploying agents in serverless (Lambda), containerized (ECS/EKS), or hybrid environments.

Code example:

from strands import Agent, tool
from strands.models import BedrockModel


@tool
def letter_counter(word: str, letter: str) -> int:
    """Count occurrences of a letter in a word."""
    return word.lower().count(letter.lower())


# Create a BedrockModel with explicit configuration
bedrock_model = BedrockModel(
    model_id="anthropic.claude-sonnet-4-20250514-v1:0",
    region_name="us-west-2",
    temperature=0.3,
)

agent = Agent(model=bedrock_model, tools=[letter_counter])
agent("How many R's are in 'strawberry'?")

Feature comparison: Choosing the right service

Similarities: The Bedrock foundation

All four services share AWS’s commitment to enterprise-grade security, integration with Amazon Bedrock Knowledge Bases and Guardrails, and serverless infrastructure eliminating capacity management. They support conversational AI patterns, tool/function calling, and memory management for context-aware interactions. Each provides version control mechanisms (Agents: aliases, Flows: versions, AgentCore: deployments, Strands: git-based) and integrates seamlessly with other AWS services like Lambda, S3, and Cognito.

Key differences: Making the choice

The fundamental split: Bedrock Agents and Flows are Bedrock-exclusive. AgentCore and Strands are LLM-agnostic, supporting external models like OpenAI GPT-4, Google Gemini, and custom deployments—critical for organizations with existing model investments or regulatory requirements for specific providers.

Control versus convenience: Bedrock Agents offers the fastest path to production through configuration rather than code, making it ideal when AWS’s managed orchestration meets your needs. Flows provides visual workflow design appealing to non-technical stakeholders while maintaining programmatic access for DevOps. AgentCore and Strands prioritize developer control—you write the orchestration logic, gaining flexibility at the cost of managing more implementation details.

Framework flexibility: AgentCore’s framework-agnostic architecture is its killer feature—teams can use LangGraph for one project, CrewAI for another, and Strands for a third, all deployed on unified infrastructure with consistent observability and security. This eliminates the “framework wars” problem plaguing multi-team organizations. Strands, being an opinionated framework itself, provides more out-of-the-box functionality but requires standardization across teams.

Multi-agent sophistication: Bedrock Agents implements hierarchical patterns with explicit supervisor-collaborator relationships, suitable for well-defined organizational hierarchies. Strands advances the state-of-the-art with four composable primitives enabling self-organizing swarms and dynamic handoffs—better for research tasks requiring emergent coordination. AgentCore enables both by supporting any framework, but you’re responsible for implementing the patterns yourself or choosing a framework that provides them.

Common misconceptions

Misconception 1: “All Bedrock services are the same”. They aren’t. Agents and Flows are tightly coupled managed services, while AgentCore is infrastructure enabling any framework. Conflating them leads to incorrect service selection.

Misconception 2: “Flows is just for non-coders”. While Flows offers visual design, its real power is deterministic orchestration with guaranteed execution order—valuable for compliance-heavy industries requiring auditability regardless of technical skill level.

Misconception 3: “Strands and AgentCore are competitors”. They’re complementary. Strands is the framework/SDK; AgentCore is the production infrastructure. Most production Strands deployments will use AgentCore Runtime for its 8-hour execution windows and session isolation, though Strands runs anywhere Python does.

Strategic recommendations: Building your agent roadmap

Start with Agents or Flows if: You need to demonstrate value quickly (days to weeks), your use case fits Bedrock’s model catalog, you lack ML engineering resources, or stakeholders prefer visual tools. Use Agents for autonomous multi-step workflows; use Flows for deterministic, auditable pipelines.

Choose AgentCore when: You’re moving from prototype to production, need multi-model or multi-framework flexibility, want broader data source integration through OAuth 2.0, MCP, OpenAPI, or Smithy, need enterprise controls (SSO, credential storage, 8-hour runtimes, comprehensive audit trails), or are building agent-to-agent communication systems. AgentCore’s modular services let you adopt incrementally: start with Runtime, then add Memory and Observability as needed.

Adopt Strands for: Greenfield projects where you control the technology stack, teams comfortable with code-first approaches, rapid iteration on multi-agent patterns (swarms, hierarchies), or scenarios requiring model switching (e.g., development on Ollama, production on Claude). Strands’ model-driven approach reduces boilerplate compared to LangChain or CrewAI, accelerating development for AWS-fluent teams.

Hybrid approach: Many organizations use multiple services simultaneously. Prototype in Flows for stakeholder buy-in, rebuild critical paths in Strands for flexibility, deploy on AgentCore Runtime for production reliability. Use Bedrock Agents for simple conversational interfaces while reserving AgentCore + Strands for complex multi-agent orchestration. The services aren’t mutually exclusive—they solve different problems along the agent lifecycle.
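The recommendations above can be condensed into a small decision helper. Treat it as a simplification for discussion rather than an official AWS rule set: the `Requirements` fields, the order of the checks, and the returned labels are my own reading of the guidance in this section.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    needs_framework_choice: bool = False     # e.g. LangGraph or CrewAI alongside Strands
    needs_external_models: bool = False      # e.g. OpenAI, Gemini, local Ollama
    code_first_team: bool = False            # team prefers writing orchestration in code
    wants_visual_builder: bool = False       # stakeholders want a drag-and-drop canvas
    needs_deterministic_steps: bool = False  # auditable, fixed execution order


def recommend(req: Requirements) -> str:
    """Return a starting point; checks run from most to least flexibility required."""
    if req.needs_framework_choice:
        return "AgentCore"
    if req.needs_external_models or req.code_first_team:
        return "Strands on AgentCore Runtime"
    if req.wants_visual_builder or req.needs_deterministic_steps:
        return "Bedrock Flows"
    return "Bedrock Agents"
```

For example, `recommend(Requirements(needs_deterministic_steps=True))` points to Flows, while an empty `Requirements()` falls through to the managed default, Bedrock Agents.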

How AgentCore enables framework choice

AgentCore’s framework-agnostic design addresses a critical enterprise challenge: different teams prefer different frameworks (data scientists love LlamaIndex, ML engineers prefer LangGraph, researchers want Strands), but operating multiple agent platforms creates operational chaos. AgentCore provides unified infrastructure—Runtime, Memory, Identity, Observability—that works identically whether you’re deploying a CrewAI multi-agent system or a LangGraph state machine.

Practical example: A financial services firm has three teams. The fraud detection team uses LangGraph for deterministic state transitions required by regulators. The customer support team uses Strands for its simple agent-as-tools pattern. The research team uses OpenAI’s Agents SDK to leverage GPT’s latest features. All three deploy to AgentCore Runtime, share AgentCore Memory for cross-agent context, and monitor via unified AgentCore Observability dashboards—no team rewrites their agents, yet the platform team maintains a single operational model.
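One way to picture why this works: the platform only needs each agent to expose the same entrypoint contract, a function from a payload to a result. The sketch below is a toy model of that idea, not AgentCore code. The two agent functions are stand-ins for real frameworks, and the adapter names and `DEPLOYMENTS` registry are hypothetical.

```python
from typing import Any, Callable, Dict

Payload = Dict[str, Any]
Entrypoint = Callable[[Payload], Payload]


def graph_style_agent(state: Dict[str, str]) -> Dict[str, str]:
    """Stand-in for a graph-based agent that maps a state dict to a new state dict."""
    return {**state, "answer": f"graph result for {state['question']}"}


def callable_style_agent(prompt: str) -> str:
    """Stand-in for a callable agent that maps a prompt string to a reply string."""
    return f"agent reply to {prompt}"


def wrap_graph(agent: Callable[[Dict[str, str]], Dict[str, str]]) -> Entrypoint:
    """Adapt a state-in, state-out agent to the shared payload contract."""
    def entrypoint(payload: Payload) -> Payload:
        state = agent({"question": payload.get("prompt", "")})
        return {"result": state["answer"]}
    return entrypoint


def wrap_callable(agent: Callable[[str], str]) -> Entrypoint:
    """Adapt a prompt-in, text-out agent to the shared payload contract."""
    def entrypoint(payload: Payload) -> Payload:
        return {"result": agent(payload.get("prompt", ""))}
    return entrypoint


# Each team registers its agent behind the same contract.
DEPLOYMENTS: Dict[str, Entrypoint] = {
    "fraud-detection": wrap_graph(graph_style_agent),
    "customer-support": wrap_callable(callable_style_agent),
}


def invoke(name: str, payload: Payload) -> Payload:
    """The platform side: it never needs to know which framework is behind the name."""
    return DEPLOYMENTS[name](payload)
```

Everything framework-specific lives inside the adapters, which is why the platform team can keep a single operational model while each team keeps its framework.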

This architecture also future-proofs investments. When a new framework emerges or a team wants to experiment with novel architectures, they integrate it with AgentCore rather than rebuilding infrastructure.

Conclusion: Four pathways, one ecosystem

Amazon Bedrock’s four agentic services represent distinct philosophies: managed convenience (Agents), visual orchestration (Flows), infrastructure platform (AgentCore), and developer framework (Strands). The critical decision axis is LLM flexibility—if Bedrock models suffice, Agents and Flows offer the fastest time-to-value. If you need multi-model support or framework choice, AgentCore and Strands provide enterprise-grade flexibility without sacrificing AWS integration.

For AWS-fluent developers, the sweet spot is often AgentCore + Strands: Strands’ model-driven SDK eliminates orchestration boilerplate, while AgentCore’s production infrastructure (8-hour runtimes, session isolation, comprehensive observability) provides enterprise reliability.

As these services mature—Agents gaining more advanced multi-agent patterns, Flows expanding node types, AgentCore adding services, Strands evolving its multi-agent primitives—expect convergence in capabilities with persistent differentiation in developer experience. Choose based on your team’s skills, timeline pressures, and long-term flexibility requirements. The winning strategy isn’t picking the “best” service; it’s matching service capabilities to specific use cases within your agent portfolio.

🏋️ What You Should Do This Week

Pick your service based on the comparison above: if you need managed speed, go Agents; if you need visual workflows, go Flows; if you need framework flexibility, go AgentCore + Strands.

Clone one official repo and run a hello-world example:

Reply with your architecture question: Building agents in production? Hit reply with one challenge you’re facing (deployment, observability, tool integration). I’ll deep dive on the best one next issue.

That’s it for Pumping Code #7.

You now have the clarity to choose your agent stack and the code to validate it works. No more decision paralysis—just build, deploy, iterate.

See you in the next rep. 💪

— Puria
