The Runtime Is the Moat: OpenAI’s New Agent Harness, on AWS

OpenAI GPT‑5.5 and GPT‑5.4 on Bedrock, Codex on Bedrock, and Bedrock Managed Agents, powered by OpenAI

OpenAI shipped the next evolution of the Agents SDK on April 15. Two weeks later, AWS turned around and put GPT‑5.5 and GPT‑5.4 on Amazon Bedrock, plus Codex on Bedrock, plus a managed agent product called Bedrock Managed Agents, powered by OpenAI — running on top of AgentCore.

Two announcements, one pattern. The agent loop is no longer something you write. It’s a product surface — and it’s now portable across clouds.

Let’s unpack what just happened, what the primitives actually are, and why this is the pattern your stack will be built on for the next three years.

The Agents SDK got a real harness

For two years “agent” has meant “a while loop somebody hand-rolled around an LLM call.” That era ended on April 15.

The Agents SDK now ships a model-native harness — a built-in agent loop co-designed with frontier OpenAI models (GPT‑5.5, GPT‑5.4, GPT‑5.3‑Codex). The loop knows how the model wants to think, when to checkpoint, when to call tools, when to spawn a subagent. That’s the harness.

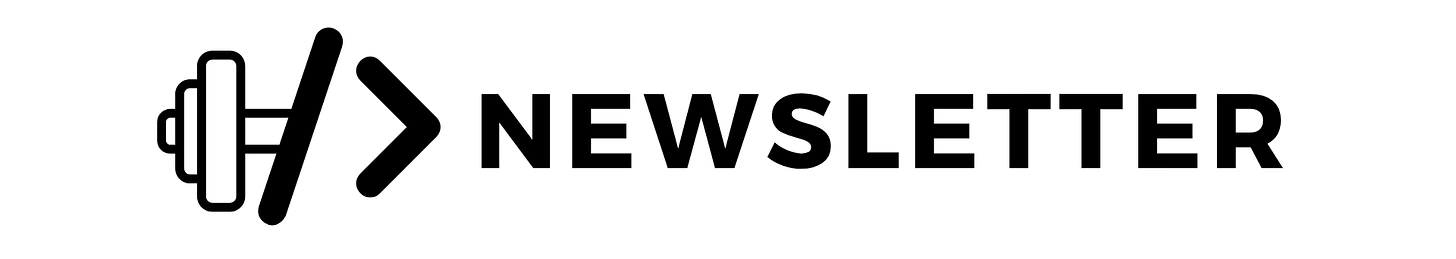

But the bigger architectural move is what comes next: the harness now ships with a native sandbox, and the two are deliberately separated.

The OpenAI primitives you should know:

Harness (the agent loop itself): Runner, RunConfig, MCP tool use, Skills (progressive disclosure), AGENTS.md (custom instructions), shell (code execution), apply patch (file edits), configurable memory, snapshot + rehydrate for durable runs.

Sandbox (the isolated compute layer where the agent’s filesystem ops, shell commands, and tool calls actually execute): SandboxAgent, SandboxRunConfig, UnixLocalSandboxClient, and a Manifest abstraction that mounts local dirs and remote storage (S3, GCS, Azure Blob, R2).

Sandbox providers: first-class support for Blaxel, Cloudflare, Daytona, E2B, Modal, Runloop, Vercel.

The architecture is captured cleanly in OpenAI’s two diagrams: “Harness with compute” and the more important one — “Harness separate from compute”. Same loop, two different compute substrates.

Why the split matters:

Security. Credentials live with the harness. Model‑generated code runs in the sandbox. A prompt injection that hijacks a tool call lands in a sandbox that holds no secrets to exfiltrate.

Durability. Sandboxes snapshot. If a container dies, the run rehydrates in a fresh one. Long‑running agents survive infra failures.

Scale. One harness can fan out across many sandboxes, spawn subagents, and parallelize work — every workload in its own blast radius.
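To make those three properties concrete, here is a toy sketch in plain Python. It does not use the Agents SDK: ToySandbox, SAFE_ENV_KEYS, DEMO_API_KEY, and the three helper functions are hypothetical names invented for illustration, and a child process with a scrubbed environment is only a stand-in for a real isolated container.

```python
import os
import subprocess
import sys
import tempfile
from concurrent.futures import ThreadPoolExecutor

# Hypothetical allowlist: the only variables the sandbox child process may inherit.
SAFE_ENV_KEYS = ("PATH", "SYSTEMROOT", "LD_LIBRARY_PATH")


class ToySandbox:
    """Stand-in for a sandbox: a scratch directory plus a child process with a scrubbed env."""

    def __init__(self) -> None:
        self.workdir = tempfile.mkdtemp(prefix="toy-sandbox-")

    def run(self, code: str) -> str:
        # Model-generated code runs in a separate process that never sees harness secrets.
        env = {k: os.environ[k] for k in SAFE_ENV_KEYS if k in os.environ}
        done = subprocess.run(
            [sys.executable, "-c", code],
            cwd=self.workdir, env=env, capture_output=True, text=True, timeout=30,
        )
        return done.stdout.strip()

    def snapshot(self) -> dict[str, str]:
        # Capture every regular file in the scratch directory as text.
        snap = {}
        for name in os.listdir(self.workdir):
            path = os.path.join(self.workdir, name)
            if os.path.isfile(path):
                with open(path, encoding="utf-8") as fh:
                    snap[name] = fh.read()
        return snap

    @classmethod
    def rehydrate(cls, snap: dict[str, str]) -> "ToySandbox":
        # Restore a snapshot into a brand-new scratch directory.
        box = cls()
        for name, content in snap.items():
            with open(os.path.join(box.workdir, name), "w", encoding="utf-8") as fh:
                fh.write(content)
        return box


def secret_stays_with_harness() -> str:
    # Security: the harness process holds the credential, the sandbox child does not.
    os.environ["DEMO_API_KEY"] = "sk-demo-not-real"
    return ToySandbox().run("import os; print(os.environ.get('DEMO_API_KEY'))")


def survives_a_dead_container() -> str:
    # Durability: checkpoint one sandbox, then continue the work in a fresh one.
    first = ToySandbox()
    first.run("open('notes.txt', 'w').write('step 1 done')")
    fresh = ToySandbox.rehydrate(first.snapshot())
    return fresh.run("print(open('notes.txt').read())")


def fan_out(n: int) -> list[str]:
    # Scale: one harness, many sandboxes, each job in its own scratch directory.
    with ThreadPoolExecutor() as pool:
        return list(pool.map(lambda i: ToySandbox().run(f"print({i} * {i})"), range(n)))


if __name__ == "__main__":
    print(secret_stays_with_harness())
    print(survives_a_dead_container())
    print(fan_out(3))
```

The first call should print None, because the key never crosses the process boundary. A real sandbox provider adds the isolation this sketch lacks (network, filesystem, kernel), but the shape of the contract is the same: the harness keeps the secrets and the state, the sandbox only gets work.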

That separation is the seam OpenAI just opened up. And that seam is exactly what AWS plugged into.

☁️ OpenAI on AWS — three things shipped at once

On April 28, AWS and OpenAI announced an expanded partnership covering three offerings, all in limited preview:

OpenAI models on Amazon Bedrock — the latest frontier OpenAI models (GPT‑5.5, GPT‑5.4) served through the same Bedrock APIs you already use. IAM, PrivateLink, Guardrails, CloudTrail, KMS, AWS commit billing — every governance lever you have works on day one.

Codex on Bedrock — the Codex CLI, desktop app, and VS Code extension, powered by OpenAI models served from Bedrock. Codex has >4M weekly users; now those users can run on AWS infrastructure that the enterprise security team already approves.

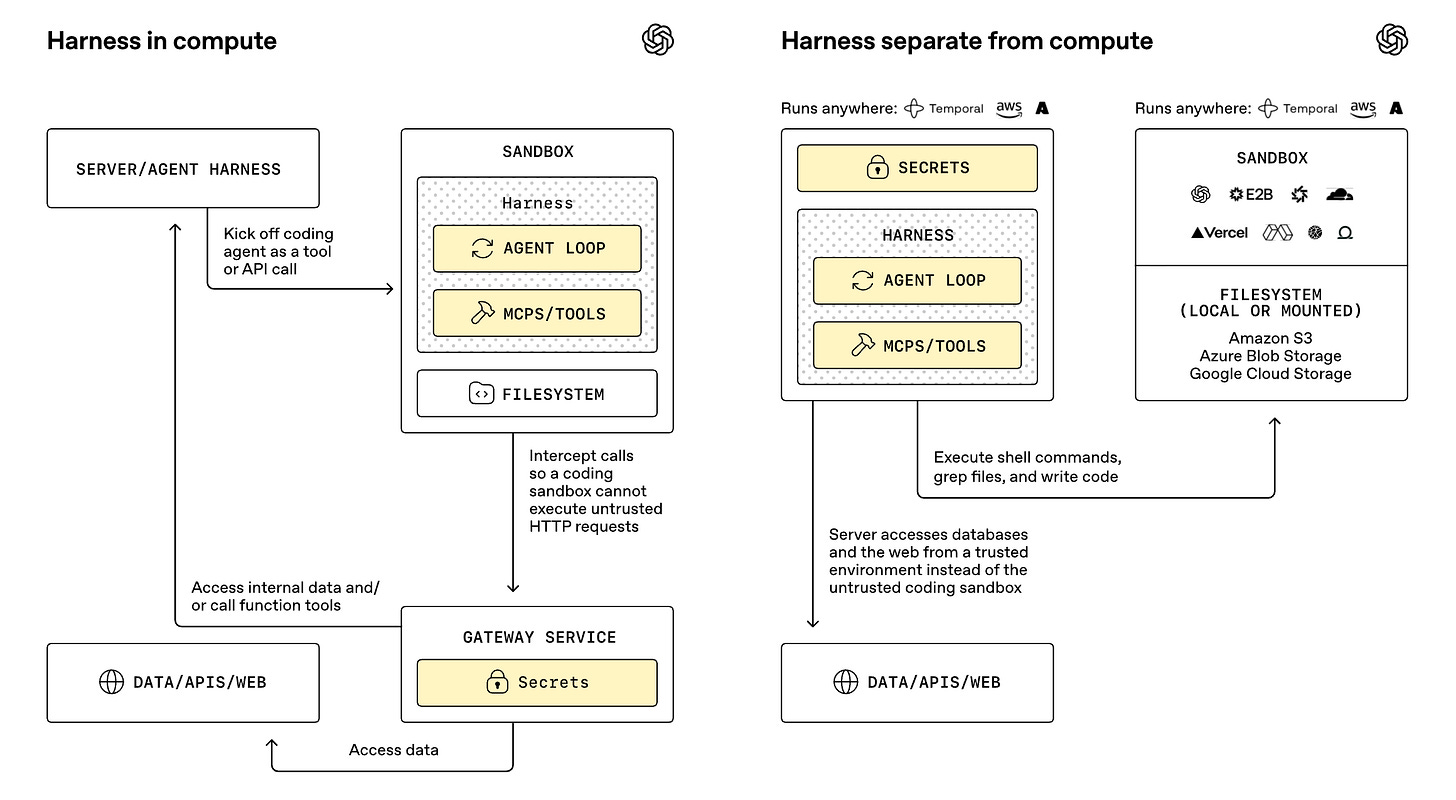

Bedrock Managed Agents, powered by OpenAI — and this is the punchline: a managed agent product built with the OpenAI agent harness, running by default on AgentCore, with all inference on Bedrock and data never leaving AWS.

Box CTO Ben Kus summed it up for Box’s 115,000 customer organizations, which don’t want to choose between OpenAI’s models and AWS’s posture: enterprises get frontier intelligence on infrastructure they already trust. That’s the customer message. The architectural reality is more interesting.

🧠 Bedrock AgentCore, in primitives

If the OpenAI harness is the loop, AgentCore is the runtime that hosts it on AWS. From the Bedrock Managed Agents page and the AgentCore product diagram, the primitives explicitly named in this launch are:

Runtime — the managed compute environment hosting the agent.

Identity — every agent gets its own identity; every action is logged for audit.

Memory — persistent across sessions, scoped per agent.

Authorization‑policy enforcement — who can call which tool, on which data.

Agent and tool discovery — the registry layer for what’s available at runtime.

Observability + evaluation — you can see what your agents did and grade it.

These line up tightly with the OpenAI harness contract — the harness asks for memory, skills, tools, identity, and a sandbox; AgentCore provides every one of those, plus the AWS‑native plumbing the harness doesn’t care about (IAM, PrivateLink, CloudTrail, Guardrails).
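What “every agent gets its own identity,” “every action is logged,” and “who can call which tool, on which data” could look like in code is sketched below. This is not the AgentCore API: Grant, authorize, the agent name, and the S3-style resource strings are all hypothetical, and a real policy engine would be far richer than a prefix match.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Grant:
    """Hypothetical policy entry: one agent identity, one tool, one slice of data."""
    agent_id: str
    tool: str
    resource_prefix: str


def authorize(grants: list[Grant], audit_log: list[dict],
              agent_id: str, tool: str, resource: str) -> bool:
    # Allow the call only if some grant matches identity, tool, and data scope.
    allowed = any(
        g.agent_id == agent_id
        and g.tool == tool
        and resource.startswith(g.resource_prefix)
        for g in grants
    )
    # Every decision is recorded, allowed or not, so the audit trail is complete.
    audit_log.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "agent": agent_id,
        "tool": tool,
        "resource": resource,
        "allowed": allowed,
    })
    return allowed


if __name__ == "__main__":
    grants = [Grant("billing-agent", "read_file", "s3://invoices/")]
    log: list[dict] = []
    print(authorize(grants, log, "billing-agent", "read_file", "s3://invoices/2025/01.pdf"))
    print(authorize(grants, log, "billing-agent", "shell", "s3://invoices/2025/01.pdf"))
    print(len(log))
```

The point of the sketch is the placement: the check and the log sit in the runtime, outside the agent loop, so the harness does not have to know the policy exists.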

🏗️ The full stack, drawn in one place

Here’s how the layers actually compose for Bedrock Managed Agents, powered by OpenAI:

Map that against the OpenAI SDK diagram: the OpenAI harness is the same loop, lifted out of OpenAI’s SaaS plane and dropped into AWS’s plane — with AgentCore playing the role that “your sandbox” plays in OpenAI’s own stack. The seam OpenAI built for portability is the seam AWS used to host the harness on enterprise infrastructure.

That’s the pattern. Remember it.

💪 Why this matters

Three things just got real, fast.

The harness is now a product layer, not your code. OpenAI is asserting that the agent loop is opinionated, model-native, and shipped — not something every team rolls themselves with LangGraph and prayers. Skills, MCP, AGENTS.md, shell, apply patch, Manifest, snapshot/rehydrate — these are the new contracts.

Compute is decoupled from the loop, on purpose. That’s why your harness can run on E2B today and on AgentCore tomorrow without rewriting your agent. The contract between layers is what matters.
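A minimal sketch of what “the contract between layers” means in practice: the agent code depends on an interface, and the compute behind it can be swapped. SandboxClient, SubprocessSandbox, InProcessSandbox, and agent_task are hypothetical names for illustration, not the SDK’s types, and the in-process backend offers no isolation at all. It exists only to show the swap.

```python
import contextlib
import io
import subprocess
import sys
from typing import Protocol


class SandboxClient(Protocol):
    """Hypothetical contract: the only thing the agent code knows about compute."""

    def run_python(self, code: str) -> str: ...


class SubprocessSandbox:
    """Backend 1: run the code in a separate Python process."""

    def run_python(self, code: str) -> str:
        done = subprocess.run(
            [sys.executable, "-c", code],
            capture_output=True, text=True, timeout=30,
        )
        return done.stdout.strip()


class InProcessSandbox:
    """Backend 2: run the code in this process. No isolation; demo only."""

    def run_python(self, code: str) -> str:
        buf = io.StringIO()
        with contextlib.redirect_stdout(buf):
            exec(code, {})  # fresh globals so runs do not share state
        return buf.getvalue().strip()


def agent_task(sandbox: SandboxClient) -> str:
    # The "agent" is written once, against the contract, not against a provider.
    return sandbox.run_python("print(sum(range(10)))")


if __name__ == "__main__":
    for backend in (SubprocessSandbox(), InProcessSandbox()):
        print(type(backend).__name__, agent_task(backend))
```

Both backends return the same answer to the same agent_task call. Swapping providers becomes a one-line change at the call site, which is the property the harness/compute split is designed to give you.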

Cloud governance is now a first-class agent concern. Every enterprise blocker — “can we use OpenAI without data leaving our perimeter?” — just got an answer: yes, on Bedrock, on AgentCore, with full AWS controls. The Box quote isn’t marketing fluff. It’s a forward indicator of where every regulated company is going.

If you’ve been hand-rolling agent loops, the gravity is now strongly toward adopting these primitives — because the cloud you ship them on, the model you call, and the harness you orchestrate are now three independent decisions instead of one tangled mess.

🚀 What you should do this week

Read the OpenAI Agents SDK post. Specifically the separation of harness and compute diagram — it’s the mental model for everything downstream.

Pin openai-agents>=0.14.0 in a sandboxed test repo. Wire up a SandboxAgent against Modal or E2B. See the seam yourself.

Sign up for the Bedrock Managed Agents (OpenAI) limited preview. Even if you don’t ship on it, understanding how the OpenAI harness maps onto AgentCore primitives will pay dividends in every architecture review for the next two years.

Audit your current agent stack. Which layer are you writing — the harness, the sandbox, the policy layer, the runtime? Pick the one that’s not differentiated, and replace it with the primitive.

That’s the play. The model will keep getting better. The loop is now opinionated. The runtime is the moat — and right now, the moat just got a lot wider.

📚 Overview of all announcements

Have a fantastic week & until next time, keep pumping that code! 💪🏽

— Puria Izady,

Founder, Pumping Code